As artificial intelligence continues to reshape modern business models, organizations across every sector are racing to integrate the latest generative tools to secure a competitive edge. Yet, beneath the relentless pursuit of smarter algorithms and massive datasets lies an overlooked physical reality. We are fast approaching a critical intersection where digital innovation collides with physical infrastructure and corporate sustainability commitments. The next frontier of the AI revolution isn’t just about writing better software—it is about securing the immense power required to run it.

1. The Core Problem: A Shift from Algorithms to Energy

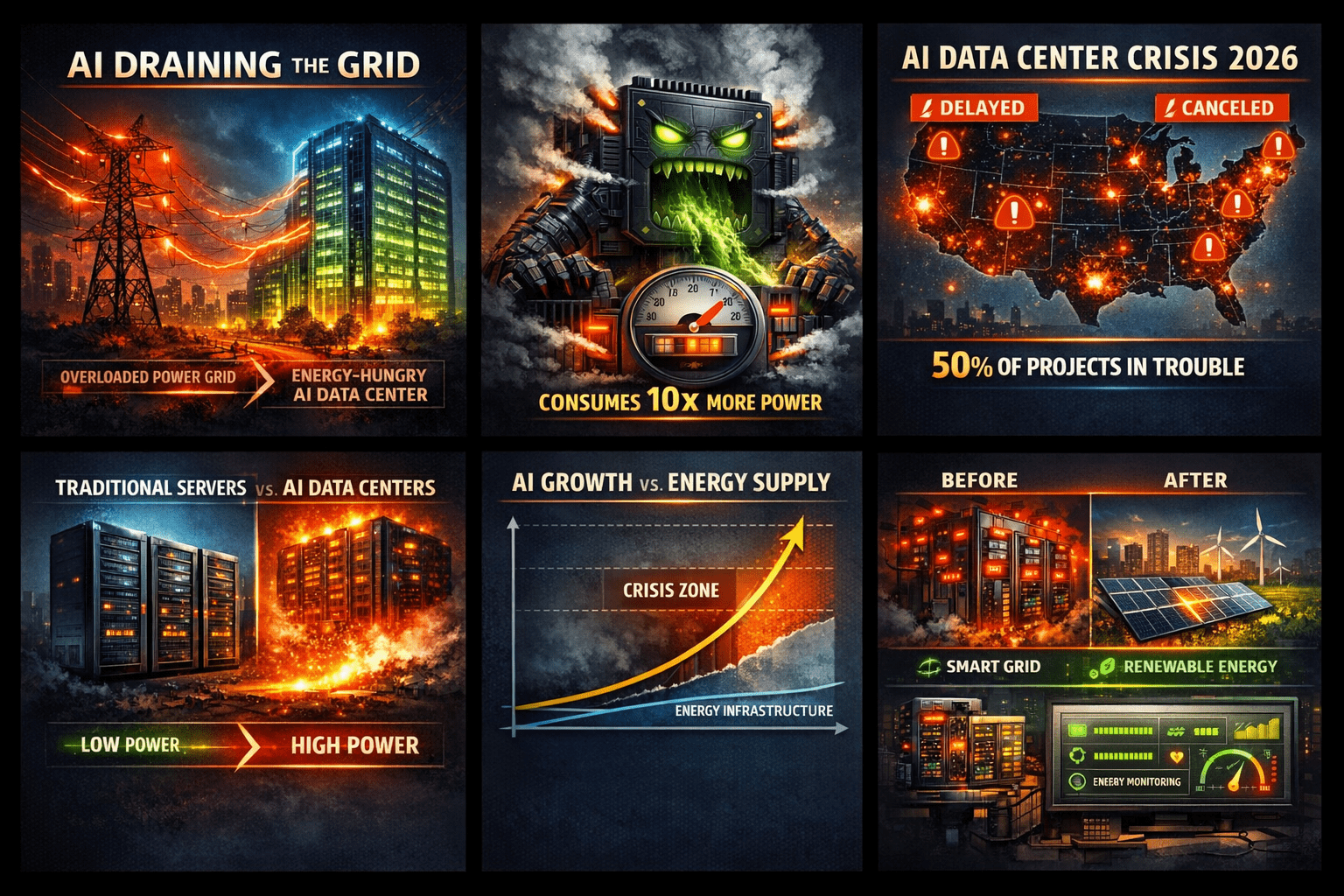

For years, the artificial intelligence narrative has been dominated by algorithms, model parameters, and data availability. However, a new, critical constraint is emerging: AI is no longer limited by intelligence; it is limited by energy. Modern AI systems, particularly large-scale models, demand staggering computational power. Consequently, data centers optimized for AI workloads consume up to 10 times more electricity than traditional computing infrastructure.

2. A Growing Infrastructure Crisis

Industry estimates warn that by 2026, up to 50% of planned AI data centers in the U.S. could face delays or cancellations. The primary hurdle isn’t a lack of capital or technological capability, but rather insufficient power supply and grid capacity. This highlights a stark imbalance: while AI demand is scaling exponentially, energy infrastructure is expanding linearly. This mismatch is creating a major bottleneck that threatens to decelerate global AI deployment.
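To make the exponential-versus-linear mismatch concrete, here is a minimal sketch in Python. Every number in it is a hypothetical placeholder chosen for illustration, not a figure from any industry estimate, and the function name `first_shortfall_year` is invented for this example. It compounds demand each year, adds a fixed amount of capacity each year, and reports the first year in which demand overtakes supply.

```python
def first_shortfall_year(demand_gw, capacity_gw, demand_growth,
                         capacity_added_gw, horizon_years=30):
    """Return the first year (1-based) in which demand exceeds capacity.

    All inputs are illustrative assumptions:
      demand_gw          starting AI power demand
      capacity_gw        starting available grid capacity
      demand_growth      yearly demand growth rate (0.30 means 30%)
      capacity_added_gw  fixed capacity added each year
    Returns None if no shortfall occurs within horizon_years.
    """
    for year in range(1, horizon_years + 1):
        demand_gw *= 1 + demand_growth    # compounding (exponential) growth
        capacity_gw += capacity_added_gw  # fixed additions (linear growth)
        if demand_gw > capacity_gw:
            return year
    return None


# Hypothetical scenario: demand starts at half of capacity but grows 30% a year,
# while capacity gains a fixed 1 GW a year.
print(first_shortfall_year(10.0, 20.0, 0.30, 1.0))
```

Even when capacity starts with a comfortable margin, compounding demand closes the gap within a few years under these assumed inputs. Changing the growth rate or the yearly additions shifts the crossover year, but not the overall shape of the problem.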

3. What Drives AI’s Massive Energy Consumption?

Three primary factors contribute to this unprecedented power surge:

- Massive Computational Requirements: Today’s models operate with billions or even trillions of parameters, requiring continuous, high-intensity processing for both training and inference.
- Specialized Hardware: The advanced GPUs and TPUs powering these workloads are inherently energy-intensive, especially when deployed at massive scale.
- Real-Time Processing Demands: Modern AI applications require ultra-low latency and instant responses, forcing hardware to run at peak capacity 24/7.

4. The ESG Imperative: Beyond Operational Costs

The AI energy crisis extends far beyond rising operational expenditures (OpEx). It introduces profound structural and environmental risks:

- Stalling the deployment of large-scale AI infrastructure.
- Placing unprecedented strain on aging national power grids.
- Driving up carbon emissions, which directly threatens corporate Net Zero commitments and undermines broader ESG (Environmental, Social, and Governance) goals.

Without solving the energy equation, AI risks stalling its own momentum.

5. The Solution: Intelligent and Sustainable Ecosystems

To overcome these growing pains, the industry must pivot toward comprehensive, tech-driven energy management. Key enablers include:

- IoT-Driven Energy Monitoring: Utilizing the Internet of Things (IoT) to track power consumption at a granular level, instantly detect inefficiencies, and optimize data center performance in real-time.
- Smart Grid Technology: Enabling dynamic load distribution, seamlessly integrating renewable energy sources, and alleviating pressure on legacy infrastructure.
- Hardware and Algorithmic Innovation: Transitioning toward highly targeted Small Language Models (SLMs) and adopting advanced infrastructure, such as liquid cooling systems, to drastically reduce power overhead.
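The IoT-driven monitoring idea can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not a description of any particular monitoring product. The rack names, readings, and the 25% tolerance are hypothetical, and the sketch adds one metric the article does not mention: Power Usage Effectiveness (PUE), a widely used data center ratio of total facility power to IT equipment power.

```python
from statistics import mean


def pue(total_facility_kw, it_equipment_kw):
    """Power Usage Effectiveness: total facility power / IT equipment power.

    A value close to 1.0 means little power is spent on overhead
    such as cooling and power conversion.
    """
    if it_equipment_kw <= 0:
        raise ValueError("IT equipment power must be positive")
    return total_facility_kw / it_equipment_kw


def flag_inefficient_racks(readings_kw, tolerance=0.25):
    """Return sorted rack IDs whose latest reading exceeds the average of
    their earlier readings by more than `tolerance` (0.25 means 25%).

    readings_kw maps a rack ID to a list of power samples in kW, oldest first.
    The threshold rule is an assumption made for this sketch.
    """
    flagged = []
    for rack_id, samples in readings_kw.items():
        if len(samples) < 2:
            continue  # not enough history to form a baseline
        baseline = mean(samples[:-1])
        if baseline > 0 and samples[-1] > baseline * (1 + tolerance):
            flagged.append(rack_id)
    return sorted(flagged)


# Hypothetical sensor data for two racks.
readings = {
    "rack-a": [10, 10, 10, 14],   # latest sample jumps well above baseline
    "rack-b": [8, 8, 8, 8.5],     # stays within tolerance
}
print(pue(1500, 1000))
print(flag_inefficient_racks(readings))
```

In practice the samples would arrive as a stream from metered power distribution units or rack sensors, and the baseline would likely account for workload and time of day. The point of the sketch is only that granular readings make this kind of per-rack comparison possible.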

6. The Strategic Opportunity: The True AI Race

While the mainstream focus remains on building “smarter” models, the true competitive advantage of the next decade lies elsewhere: energy optimization. Organizations that successfully improve energy efficiency, deploy intelligent management systems, and align AI operations with sustainable power will do more than just cut costs. They will dictate the pace of the next technological frontier.

7. Conclusion

We are entering an era where AI’s evolution is constrained not by its code, but by its physical footprint. The data center energy squeeze is not a distant forecast; it is a current reality. In this new landscape, pioneering sustainable energy infrastructure is not just an operational necessity—it is the very foundation of AI’s future.